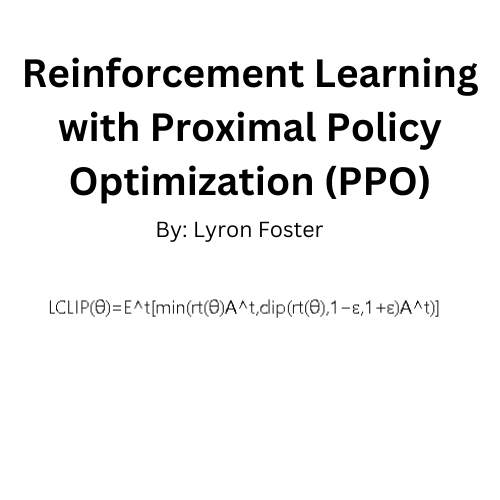

Reinforcement Learning (RL) has been a popular topic in the AI community, especially with its potential in training agents to perform tasks in environments where the correct decision isn’t always obvious. One of the most widely used algorithms in RL is Proximal Policy Optimization (PPO). In this tutorial, we’ll discuss its foundational concepts and implement it from scratch.

Traditional policy gradient methods can be unstable: a single overly large update can push the policy into a poor region from which training is slow to recover. PPO was introduced as a more stable and robust alternative. PPO’s key idea is to limit the change in policy at each update, ensuring that the new policy isn’t too different from the old one.

Let’s get up to speed

Before diving in, let’s get familiar with some concepts:

- Policy: The strategy an agent employs to determine the next action based on the current state.

- Advantage Function: Indicates how much better an action is compared to the average action at a particular state.

- Objective Function: For PPO, this function helps in updating the policy in the direction of better performance while ensuring changes aren’t too drastic.
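To make the advantage function concrete, here is a minimal sketch for a single state. It assumes we already know an action value `Q(s, a)` for every action, which in practice has to be estimated; the function name and the numbers are purely illustrative.

```python
def advantages_for_state(q_values, action_probs):
    """Return A(s, a) = Q(s, a) - V(s) for every action in one state.

    V(s) is the policy-weighted average of the action values, i.e. the
    value of the "average action" under the current policy.
    """
    state_value = sum(p * q for p, q in zip(action_probs, q_values))
    return [q - state_value for q in q_values]


# Hypothetical numbers for a state with three actions.
q_values = [1.0, 2.0, 4.0]
action_probs = [0.5, 0.25, 0.25]

advantages = advantages_for_state(q_values, action_probs)
print(advantages)  # [-1.0, 0.0, 2.0]
```

A positive advantage marks an action that is better than the policy's average in that state, a negative one marks an action that is worse.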

PPO Algorithm

PPO’s Objective Function:

Let’s define:

- `L^CLIP(θ)` as the PPO objective we want to maximize.

- `r_t(θ)` as the ratio of the probability under the current policy to the probability under the old policy for the action taken at time t.

- `A^_t` as the estimated advantage at time t.

- `ε` as a small value (typically 0.2) which limits the change in the policy.

The objective function is formulated as:

L^CLIP(θ) = Expected value over time [ min( r_t(θ) * A^_t , clip(r_t(θ), 1-ε, 1+ε) * A^_t ) ]
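In standard mathematical notation, the same objective can be written as follows. The symbols for the current policy, the old policy, the state `s_t` and the action `a_t` are notation introduced here to spell out the ratio defined earlier.

```latex
r_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\text{old}}}(a_t \mid s_t)},
\qquad
L^{CLIP}(\theta) = \hat{\mathbb{E}}_t \left[ \min\left( r_t(\theta)\,\hat{A}_t,\;
\operatorname{clip}\left(r_t(\theta),\, 1-\varepsilon,\, 1+\varepsilon\right) \hat{A}_t \right) \right]
```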

In simpler terms:

- Calculate the expected value (or average) over all time steps.

- For each time step, take the minimum of two values:

  - The product of the ratio `r_t(θ)` and the advantage `A^_t`.

  - The product of the clipped ratio (restricted between `1-ε` and `1+ε`) and the advantage `A^_t`.

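The clipping is what keeps updates from being too drastic: once the ratio moves outside the range [1-ε, 1+ε] in the direction favored by the advantage, the objective stops increasing, so there is no incentive to push the policy further away from the old one. Taking the minimum keeps the unclipped term whenever it is lower, so changes that make the objective worse are still penalized in full.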
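A small numeric sketch of the per-time-step term may help. It uses plain Python rather than TensorFlow, and the function name and the sample ratios are illustrative assumptions.

```python
def clipped_surrogate(ratio, advantage, epsilon=0.2):
    """Per-time-step PPO term: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    return min(ratio * advantage, clipped_ratio * advantage)


# Good action (A > 0): raising the ratio beyond 1 + eps earns nothing extra.
print(clipped_surrogate(1.5, 2.0))   # 2.4, the same value as at ratio 1.2
# Bad action (A < 0): lowering the ratio below 1 - eps earns nothing extra.
print(clipped_surrogate(0.5, -2.0))  # -1.6, the same value as at ratio 0.8
# Moving the wrong way is not clipped, so the penalty is felt in full.
print(clipped_surrogate(1.5, -2.0))  # -3.0
```

The first two cases show where the objective goes flat; the third shows that the minimum never hides a change that makes things worse.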

Implementation

First, let’s define some preliminary code and imports:

    import numpy as np
    import tensorflow as tf

    class PolicyNetwork(tf.keras.Model):
        def __init__(self, n_actions):
            super(PolicyNetwork, self).__init__()
            self.fc1 = tf.keras.layers.Dense(128, activation='relu')
            self.fc2 = tf.keras.layers.Dense(128, activation='relu')
            self.out = tf.keras.layers.Dense(n_actions, activation='softmax')

        def call(self, x):
            x = self.fc1(x)
            x = self.fc2(x)
            return self.out(x)

The policy network outputs a probability distribution over actions.
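To illustrate what a probability distribution over actions means in practice, the following sketch turns raw scores into probabilities with a softmax and samples an action from them. It uses only the standard library; the scores and helper names are hypothetical and stand in for the network's final layer.

```python
import math
import random


def softmax(logits):
    """Turn raw scores into probabilities that are positive and sum to 1."""
    largest = max(logits)
    exps = [math.exp(x - largest) for x in logits]  # shift for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def sample_action(probs, rng=random):
    """Draw one action index according to the given probabilities."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


probs = softmax([2.0, 1.0, 0.1])  # hypothetical scores for three actions
action = sample_action(probs, random.Random(0))  # seeded for repeatability
print(probs, action)
```

Sampling, rather than always taking the most likely action, is what lets the agent keep exploring during training.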

Now, the main PPO update:

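    def ppo_update(policy, states, actions, advantages, old_probs, epochs=10, clip_epsilon=0.2):
        # Note: this function relies on a module-level `optimizer`.
        advantages = tf.cast(advantages, tf.float32)
        # Pick out the old probability of each taken action once, before the
        # epoch loop; gathering inside the loop would re-index an
        # already-gathered tensor from the second epoch onward.
        old_action_probs = tf.cast(tf.gather(old_probs, actions, batch_dims=1), tf.float32)
        for _ in range(epochs):
            with tf.GradientTape() as tape:
                probs = policy(states)
                action_probs = tf.gather(probs, actions, batch_dims=1)
                r = action_probs / (old_action_probs + 1e-10)
                # Negate the clipped objective because the optimizer minimizes.
                loss = -tf.reduce_mean(tf.minimum(
                    r * advantages,
                    tf.clip_by_value(r, 1 - clip_epsilon, 1 + clip_epsilon) * advantages
                ))
            grads = tape.gradient(loss, policy.trainable_variables)
            optimizer.apply_gradients(zip(grads, policy.trainable_variables))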
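The update above forms the ratio by dividing probabilities and adding `1e-10` to the denominator. A common alternative, shown here only as a sketch and not as what the code above does, is to work with log-probabilities and exponentiate their difference. The helper names are hypothetical.

```python
import math


def ratio_from_probs(new_prob, old_prob, eps=1e-10):
    """Ratio as computed in the update above: divide, guarding against zero."""
    return new_prob / (old_prob + eps)


def ratio_from_log_probs(new_log_prob, old_log_prob):
    """Alternative: subtract log-probabilities, then exponentiate."""
    return math.exp(new_log_prob - old_log_prob)


# For ordinary probabilities the two versions agree closely.
print(ratio_from_probs(0.3, 0.25))
print(ratio_from_log_probs(math.log(0.3), math.log(0.25)))

# For a very unlikely action the 1e-10 guard dominates the denominator and
# distorts the ratio, while the log-space version still gives about 2.
print(ratio_from_probs(2e-12, 1e-12))
print(ratio_from_log_probs(math.log(2e-12), math.log(1e-12)))
```

Which form to use depends on what the policy network exposes; with a softmax output layer, as in this tutorial, the division form is the more direct one.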

To train an agent in a complex environment, you might consider using OpenAI Gym. Here’s a rough skeleton:

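    import gym

    # Assumes the classic Gym API (gym < 0.26): reset() returns only the observation
    # and step() returns a 4-tuple. Newer releases return (obs, info) and a 5-tuple.
    env = gym.make('Your-Environment-Name-Here')
    policy = PolicyNetwork(env.action_space.n)
    optimizer = tf.keras.optimizers.Adam(learning_rate=0.001)

    for i_episode in range(1000):  # Train for 1000 episodes
        observation = env.reset()
        done = False
        states, actions, rewards, old_probs = [], [], [], []
        while not done:
            # The network expects a batch dimension: add one, then take row 0.
            probs = policy(observation[np.newaxis, :]).numpy()[0].astype(np.float64)
            probs /= probs.sum()  # float32 softmax output may not sum to exactly 1
            action = np.random.choice(env.action_space.n, p=probs)
            next_observation, reward, done, _ = env.step(action)
            states.append(observation)
            actions.append(action)
            rewards.append(reward)
            old_probs.append(probs)
            observation = next_observation

        # Calculate `advantages` from the collected `rewards` here.
        # ...
        # Update once per episode, after the whole rollout has been collected.
        ppo_update(policy, np.array(states, dtype=np.float32), np.array(actions),
                   advantages, np.array(old_probs, dtype=np.float32))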
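The skeleton above leaves the advantage calculation open. One simple possibility, sketched below, is to compute discounted returns from the episode's rewards and subtract their mean as a baseline. This is an assumption for illustration only: the discount factor `gamma` and both helper names are hypothetical, and PPO implementations more commonly use a learned value function as the baseline.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return the discounted sum of future rewards for every time step."""
    returns = []
    running = 0.0
    for reward in reversed(rewards):  # walk backward from the last step
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def baseline_advantages(returns):
    """Crude advantage estimate: return minus the episode's mean return."""
    mean_return = sum(returns) / len(returns)
    return [g - mean_return for g in returns]


# Hypothetical three-step episode with a reward of 1 at every step.
returns = discounted_returns([1.0, 1.0, 1.0], gamma=0.5)
print(returns)  # [1.75, 1.5, 1.0]
advantages = baseline_advantages(returns)
print(advantages)
```

The resulting list has one entry per time step, which is the shape the `advantages` argument of `ppo_update` expects.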

PPO is an effective algorithm for training agents in various environments. While the above is a simplistic overview, it captures the essence of PPO. For more intricate environments, consider using additional techniques like normalization, entropy regularization, and more sophisticated neural network architectures.
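As a rough sketch of two of the techniques just mentioned, the code below normalizes a batch of advantages and adds an entropy bonus to a policy loss. The helper names, the coefficient `entropy_coef=0.01`, and the sample numbers are illustrative assumptions rather than recommended settings.

```python
import math


def normalize(advantages, eps=1e-8):
    """Rescale advantages to roughly zero mean and unit standard deviation."""
    mean = sum(advantages) / len(advantages)
    variance = sum((a - mean) ** 2 for a in advantages) / len(advantages)
    std = math.sqrt(variance)
    return [(a - mean) / (std + eps) for a in advantages]


def entropy(probs):
    """Shannon entropy of an action distribution (higher means more exploratory)."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def loss_with_entropy_bonus(policy_loss, probs, entropy_coef=0.01):
    """Subtract a small entropy term so that minimizing favors exploration."""
    return policy_loss - entropy_coef * entropy(probs)


normalized = normalize([1.0, 2.0, 3.0])
print(normalized)
print(entropy([0.5, 0.5]), entropy([0.9, 0.1]))
print(loss_with_entropy_bonus(0.3, [0.5, 0.5]))
```

Normalization keeps the scale of the update similar across batches, and the entropy term discourages the policy from collapsing onto a single action too early.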

Lyron Foster is a Hawai’i based African American Author, Musician, Actor, Blogger, Philanthropist and Multinational Serial Tech Entrepreneur.