Elasticsearch is a widely used distributed search and analytics engine that lets users search and analyze large volumes of data in near real time, with visualization typically handled by Kibana. Like any technology, it can run into problems that undermine its effectiveness. This article covers five common Elasticsearch problems and how to address them for an effective deployment.

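1. Memory Issues: Elasticsearch is memory-hungry, and a poorly sized JVM heap leads to long garbage-collection pauses, circuit-breaker errors, or out-of-memory crashes. If the heap is too small, increase it by setting “Xms” and “Xmx” to the same value in the JVM options (a file under config/jvm.options.d/, or the ES_JAVA_OPTS environment variable) rather than in elasticsearch.yml. Bigger is not always better: keep the heap at no more than about half of the machine's RAM so the operating system's filesystem cache has room to work, and stay below the compressed object pointer threshold of roughly 32 GB.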
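
The sizing rule above can be sketched as a small calculation. This is a minimal illustration, not an official tool: the function names are made up for this example, and the 26 GB cap is an assumed conservative value for staying under the compressed object pointer threshold, which varies by system.

```python
def recommended_heap_gb(total_ram_gb, oops_safe_cap_gb=26):
    """Rule of thumb: half of RAM, capped below the compressed-oops threshold.

    oops_safe_cap_gb is an assumption; check the threshold on your own JVM.
    """
    if total_ram_gb < 2:
        raise ValueError("this rule of thumb needs at least 2 GB of RAM")
    return min(total_ram_gb // 2, oops_safe_cap_gb)


def jvm_options_lines(heap_gb):
    """Lines for a file under config/jvm.options.d/, with Xms equal to Xmx."""
    return [f"-Xms{heap_gb}g", f"-Xmx{heap_gb}g"]


if __name__ == "__main__":
    for ram_gb in (8, 64, 128):
        heap_gb = recommended_heap_gb(ram_gb)
        print(f"{ram_gb} GB RAM:", " ".join(jvm_options_lines(heap_gb)))
```

Setting both values to the same number avoids heap resizing at runtime, and the cap keeps large machines from crossing into the range where the JVM can no longer use compressed pointers.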

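2. Slow Searches: Slow searches can be caused by a number of factors, including poorly designed mappings and shard layouts, overloaded hardware, and inefficient queries. To speed up searches, move exact-match and range conditions into filter context instead of query context (filter clauses skip relevance scoring and their results can be cached), return only the fields you actually need, and tune index settings such as mappings, shard count, and refresh interval to match your workload.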
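
As a rough sketch of the filter-context advice, the function below builds a search request body in which only the full-text clause is scored. The field names (`title`, `status`, `published_at`) and the function name are hypothetical; substitute the fields from your own mapping.

```python
import json


def build_search_body(text, status, since):
    """Build a bool query: scored full-text match plus unscored filters."""
    return {
        "query": {
            "bool": {
                # Query context: contributes to the relevance score.
                "must": [{"match": {"title": text}}],
                # Filter context: yes/no conditions, no scoring, cacheable.
                "filter": [
                    {"term": {"status": status}},
                    {"range": {"published_at": {"gte": since}}},
                ],
            }
        },
        # Return only the fields the caller needs.
        "_source": ["title", "published_at"],
        "size": 10,
    }


if __name__ == "__main__":
    body = build_search_body("cluster tuning", "published", "now-30d/d")
    print(json.dumps(body, indent=2))
```

The resulting JSON can be sent as the body of a `_search` request with whichever client you already use.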

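3. Node Failure: Elasticsearch is a distributed system made up of multiple nodes. Node failures cannot be prevented entirely, so the goal is to make the cluster tolerate them: without replica shards or enough master-eligible nodes, losing a single node can make data unavailable or stall the whole cluster. Run at least three master-eligible nodes so that a majority survives the loss of one, give each index at least one replica, spread client traffic across nodes with a load balancer, and monitor cluster health continuously.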
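
The sketch below illustrates two of these points under simplifying assumptions: a plain majority rule for master-eligible nodes, and a check over the fields returned by the cluster health API (`status`, `number_of_nodes`, `unassigned_shards`). The function names and the sample response are invented for illustration; a real monitor would fetch the response from `GET _cluster/health`.

```python
def tolerated_master_failures(master_eligible_nodes):
    """Simplified majority rule: how many master-eligible nodes can be lost."""
    quorum = master_eligible_nodes // 2 + 1
    return master_eligible_nodes - quorum


def health_warnings(health, expected_nodes):
    """Turn a cluster health response (as a dict) into readable warnings."""
    warnings = []
    if health["status"] != "green":
        warnings.append(f"cluster status is {health['status']}")
    if health["number_of_nodes"] < expected_nodes:
        missing = expected_nodes - health["number_of_nodes"]
        warnings.append(f"{missing} node(s) missing from the cluster")
    if health["unassigned_shards"] > 0:
        warnings.append(f"{health['unassigned_shards']} shard(s) unassigned")
    return warnings


if __name__ == "__main__":
    # Made-up sample response for illustration only.
    sample = {"status": "yellow", "number_of_nodes": 2, "unassigned_shards": 5}
    for line in health_warnings(sample, expected_nodes=3):
        print("WARNING:", line)
    print("3 master-eligible nodes tolerate",
          tolerated_master_failures(3), "failure")
```

Under this rule two master-eligible nodes tolerate no more failures than one does, which is why three is the usual minimum.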

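4. Data Loss: Data loss is a serious risk when Elasticsearch is not properly configured. To guard against it, keep at least one replica of every shard so that data lives on more than one node, and take regular snapshots with the snapshot and restore feature, storing them in a repository outside the cluster. Replicas protect against a failed node but not against accidental deletion or corruption, so they are not a substitute for backups. Test your restores periodically.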
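
To make the replica and snapshot steps concrete, the following sketch builds the three REST requests involved as (method, path, body) tuples without sending them. The repository name, filesystem location, and index pattern are placeholders, and a shared-filesystem (`fs`) repository is assumed; it also requires the location to be listed under `path.repo` in elasticsearch.yml.

```python
import datetime
import json


def replica_request(index, replicas=1):
    """Request that sets the replica count on an existing index."""
    return ("PUT", f"/{index}/_settings",
            {"index": {"number_of_replicas": replicas}})


def register_repo_request(repo, location):
    """Request that registers a shared-filesystem snapshot repository."""
    return ("PUT", f"/_snapshot/{repo}",
            {"type": "fs", "settings": {"location": location}})


def snapshot_request(repo, indices, day):
    """Request that snapshots the given indices under a dated name."""
    name = f"snapshot-{day.isoformat()}"  # snapshot names must be lowercase
    return ("PUT", f"/_snapshot/{repo}/{name}?wait_for_completion=true",
            {"indices": indices, "include_global_state": False})


if __name__ == "__main__":
    requests = [
        replica_request("logs-app"),                              # placeholder
        register_repo_request("nightly_backups", "/mnt/es_backups"),
        snapshot_request("nightly_backups", "logs-*", datetime.date.today()),
    ]
    for method, path, body in requests:
        print(method, path)
        print(json.dumps(body, indent=2))
```

In practice a scheduler, or snapshot lifecycle management, would issue the snapshot request on a regular basis.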

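5. Security Issues: Elasticsearch often holds sensitive data, which makes it a target for cyberattacks, and clusters left reachable from the internet without authentication are a common source of data leaks. To protect your system, require authentication and role-based authorization for every user and application, encrypt traffic with TLS (the successor to SSL) both between clients and the cluster and between nodes, and regularly review logs for suspicious activity.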
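
On the client side, the authentication and encryption advice might look like the sketch below, which uses only the Python standard library and sends nothing over the network. It assumes API key authentication, where the `Authorization` header carries `ApiKey` followed by the base64 encoding of `id:api_key`; the host name, key values, and function names are placeholders.

```python
import base64
import ssl
import urllib.request


def api_key_header(key_id, api_key):
    """Authorization header for Elasticsearch API key authentication."""
    raw = f"{key_id}:{api_key}".encode("utf-8")
    return {"Authorization": "ApiKey " + base64.b64encode(raw).decode("ascii")}


def verified_tls_context(ca_file=None):
    """TLS context that verifies the server certificate and host name."""
    context = ssl.create_default_context(cafile=ca_file)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def build_health_request(base_url, key_id, api_key):
    """Prepare (but do not send) an authenticated cluster health request."""
    if not base_url.startswith("https://"):
        raise ValueError("refusing to send credentials over plain HTTP")
    return urllib.request.Request(
        base_url + "/_cluster/health",
        headers=api_key_header(key_id, api_key),
    )


if __name__ == "__main__":
    # Placeholder host and credentials; load real keys from a secret store.
    request = build_health_request("https://es.example.internal:9200",
                                   "my-key-id", "my-key-secret")
    context = verified_tls_context()
    print(request.full_url, "verify:", context.verify_mode.name)
    # To send: urllib.request.urlopen(request, context=context)
```

Rejecting plain `http://` URLs up front keeps credentials from being sent unencrypted by mistake.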

In conclusion, Elasticsearch is a powerful tool for searching and analyzing large amounts of data in near real time. An effective deployment depends on addressing common problems such as memory issues, slow searches, node failure, data loss, and security issues. By implementing the solutions discussed in this article, you can improve the performance, resilience, and security of your Elasticsearch deployment.

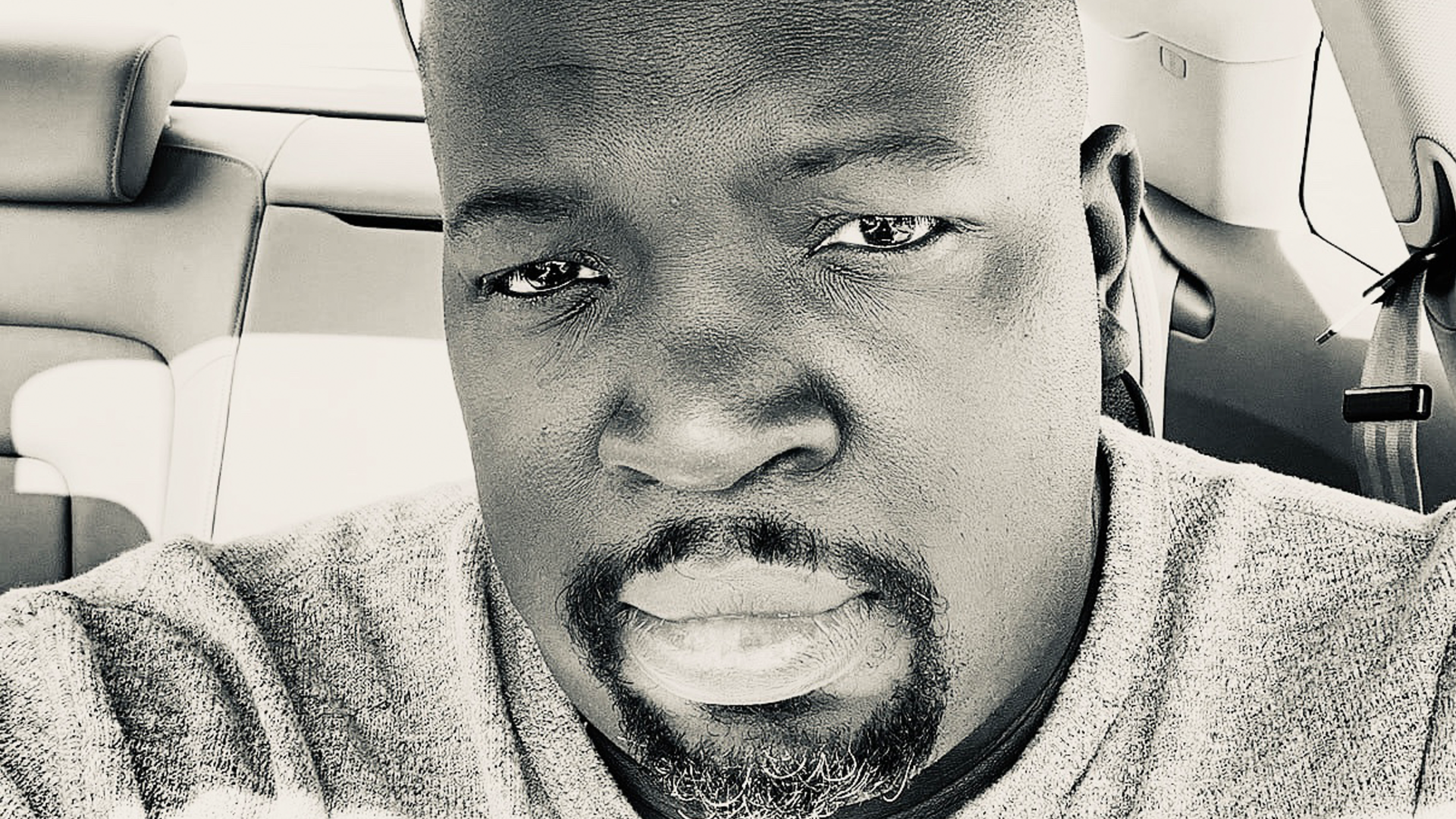

Lyron Foster is a Hawai’i-based African American Author, Musician, Actor, Blogger, Philanthropist and Multinational Serial Tech Entrepreneur.